Cursor

4.64 / 5

Fastest delivery, best architecture, and the smoothest iteration workflow.

Claude Code

4.75 / 5 core quality (4.41 / 5 including time)

Best playable result and strongest visual polish, but a major time penalty.

OpenAI Codex

4.55 / 5

Solid mechanics, quick delivery, and a reliable result with less workflow polish.

TL;DR

All three platforms produced a working browser game from a single prompt. Cursor won the full benchmark once time and iteration workflow were included. Claude Code produced the best-looking and most complete game, but took 22 minutes 20 seconds to generate it, against just over a minute for each of the other two. Codex delivered the most balanced result: playable, fast, and mechanically strong, but less visually polished and less seamless in file handling.

The Prompt

The test used a single controlled prompt asking each platform to generate a complete browser-based arcade game called Jumping Jim. The requirements were intentionally practical: HTML, CSS, and JavaScript only; one runnable HTML file; movement, jumping, hazards, collectibles, scoring, lives, collision detection, and requestAnimationFrame-based animation.
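To make the core requirements concrete, here is a minimal sketch of what gravity, collision detection, and a requestAnimationFrame loop can look like together. It is not taken from any of the three generated games; the constants and the names `step`, `jump`, `overlaps`, and `start` are hypothetical and chosen only for illustration.

```javascript
// Hypothetical tuning values; the benchmark prompt does not specify any.
const GRAVITY = 1800;    // px/s^2, pulls the player down
const JUMP_SPEED = 620;  // px/s, applied upward on jump
const FLOOR_Y = 300;     // y coordinate of the ground surface

// Axis-aligned bounding-box overlap test (touching edges do not count).
function overlaps(a, b) {
  return a.x < b.x + b.w && a.x + a.w > b.x &&
         a.y < b.y + b.h && a.y + a.h > b.y;
}

// Advance the player by dt seconds: integrate gravity, then clamp to the floor.
function step(player, dt) {
  player.vy += GRAVITY * dt;
  player.y += player.vy * dt;
  if (player.y + player.h >= FLOOR_Y) {
    player.y = FLOOR_Y - player.h;
    player.vy = 0;
    player.onGround = true;
  } else {
    player.onGround = false;
  }
}

// Start a jump only when standing on the ground.
function jump(player) {
  if (player.onGround) {
    player.vy = -JUMP_SPEED;
    player.onGround = false;
  }
}

// Browser-only driver. requestAnimationFrame passes a timestamp in milliseconds.
function start(player, hazards, onHit) {
  let last = null;
  function frame(now) {
    // Cap dt so a background tab does not produce one huge physics step.
    const dt = last === null ? 0 : Math.min((now - last) / 1000, 0.05);
    last = now;
    step(player, dt);
    if (hazards.some((h) => overlaps(player, h))) onHit();
    requestAnimationFrame(frame);
  }
  requestAnimationFrame(frame);
}
```

Keeping `step` free of any browser calls means the physics can be exercised outside the browser, while `start` is the only part that depends on requestAnimationFrame.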

The prompt also offered extra credit for browser audio, increasing difficulty, multiple levels or procedural generation, and mobile-friendly controls. That made it a useful test of both instruction following and creative initiative.
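As a rough illustration of two of these extra-credit items, procedural generation and increasing difficulty, the sketch below builds a level from a seed so the same seed always yields the same layout. It assumes a small mulberry32-style pseudo-random generator; `generateLevel`, its parameters, and all the numeric ranges are hypothetical and do not come from any of the benchmarked outputs.

```javascript
// Small seeded PRNG in the mulberry32 style. Returns a function yielding floats in [0, 1).
function mulberry32(seed) {
  let a = seed >>> 0;
  return function () {
    a = (a + 0x6D2B79F5) | 0;
    let t = Math.imul(a ^ (a >>> 15), a | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

// Hypothetical level generator: gaps widen and hazards become more likely as level rises.
function generateLevel(seed, level, count = 8) {
  const rand = mulberry32(seed + level);
  const hazardChance = Math.min(0.2 + 0.1 * level, 0.8);
  const platforms = [];
  let x = 0;
  for (let i = 0; i < count; i++) {
    const gap = 40 + rand() * (20 + 10 * level); // wider gaps at higher levels
    const width = 80 + rand() * 60;
    x += gap;
    platforms.push({
      x: Math.round(x),
      y: 200 + Math.round(rand() * 80),
      w: Math.round(width),
      hazard: rand() < hazardChance,
    });
    x += width;
  }
  return platforms;
}
```

Calling `generateLevel(42, 1)` twice returns identical layouts, which makes a generated level reproducible for debugging or for sharing a seed between players.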

Single prompt excerpt

Create a complete browser based arcade game called Jumping Jim inspired by classic platformers similar in style to Pitfall.

Use HTML, CSS, and JavaScript only. The game must run by opening a single HTML file. Include player movement, platforms, hazards, collectibles, score, lives, gravity, collision detection, and smooth animation.

Methodology

Each platform was run in a fresh session using the same prompt, with no clarification or prompt refinement. Outputs were tested locally in a desktop browser and scored across ten categories from 0 to 5. Time to completion was tracked separately as an additional 0 to 5 workflow metric. To keep the published comparison easy to read, all headline totals below are normalised to a 5-point scale.

| Test rule | How it was applied | Why it matters |
|---|---|---|
| Identical prompt | Same game brief pasted into all three platforms | Lets the output reflect platform behaviour rather than prompt tuning |
| Single-file constraint | All code had to ship in one HTML file | Tests code organisation under realistic constraints |
| Browser test | Each result was run locally without major fixes | Separates runnable software from impressive-looking code blocks |
| Time recorded | Wall-clock time from prompt submission to complete output | Captures real workflow cost, not just final quality |

Final Rankings

| Rank | Platform | Core quality | Overall incl. time | Time taken |
|---|---|---|---|---|
| 1 | Cursor | 4.60 / 5 | 4.64 / 5 | 1 min 2 sec |
| 2 | OpenAI Codex (GPT-5.4) | 4.50 / 5 | 4.55 / 5 | 1 min 15 sec |
| 3* | Claude Code (Sonnet 4.6) | 4.75 / 5 | 4.41 / 5 | 22 min 20 sec |

*Claude Code ranked third when time was included, but first by core output quality alone.

Score Breakdown

| Category | Claude Code | Cursor | Codex GPT-5.4 |
|---|---|---|---|
| Time score | 1 | 5 | 5 |
| Functional completion | 5 | 5 | 5 |
| Code quality | 4.5 | 5 | 4 |
| Game mechanics | 5 | 4 | 5 |
| Creativity and design | 5 | 3 | 4 |
| Performance | 5 | 4 | 5 |
| Instruction following | 5 | 5 | 5 |
| Error rate | 5 | 5 | 5 |
| Completeness | 5 | 5 | 5 |
| Extra features | 5 | 5 | 4 |
| Iteration capability | 3 | 5 | 3 |
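The headline totals can be reproduced from this table. The sketch below assumes that "core quality" is the unweighted mean of the ten quality categories and that "overall incl. time" is the unweighted mean of all eleven rows. The article does not state the formula, but this assumption matches the published figures; the helper names `mean` and `headline` are illustrative.

```javascript
// Scores copied from the table: ten quality categories in row order, plus the time score.
const scores = {
  "Claude Code": { time: 1, core: [5, 4.5, 5, 5, 5, 5, 5, 5, 5, 3] },
  "Cursor":      { time: 5, core: [5, 5, 4, 3, 4, 5, 5, 5, 5, 5] },
  "Codex":       { time: 5, core: [5, 4, 5, 4, 5, 5, 5, 5, 4, 3] },
};

// Unweighted arithmetic mean.
const mean = (xs) => xs.reduce((a, b) => a + b, 0) / xs.length;

// Assumed normalisation: plain averages on the 0 to 5 scale, rounded to two decimals.
function headline(entry) {
  return {
    core: mean(entry.core).toFixed(2),
    overall: mean([entry.time, ...entry.core]).toFixed(2),
  };
}

for (const [name, entry] of Object.entries(scores)) {
  const h = headline(entry);
  console.log(`${name}: core ${h.core}, overall ${h.overall}`);
}
// Claude Code: core 4.75, overall 4.41
// Cursor: core 4.60, overall 4.64
// Codex: core 4.50, overall 4.55
```

Under this reading, the time score carries the same weight as any single quality category, which is why a time score of 1 is enough to move Claude Code from first to third.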

The Games

The best playable artifact

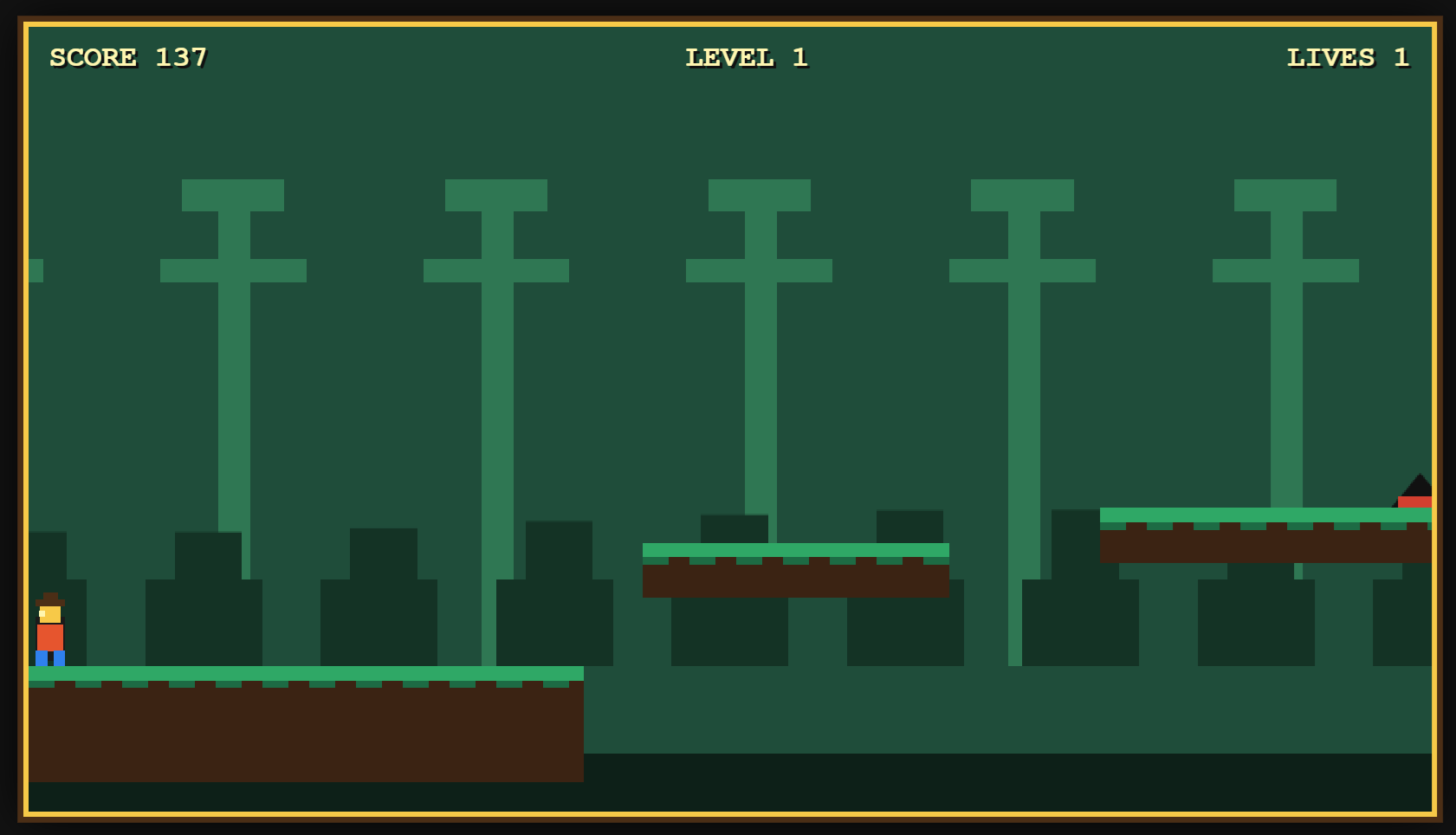

Claude Code produced the strongest visual output: layered trees, grass terrain, floating platforms, collectibles, hearts for lives, a timer, level display, audio, and mobile controls. It looked and felt like the most complete game.

Best for polished demos. Weakness: the generation time of 22 minutes 20 seconds is a serious workflow cost.

The best engineering workflow

Cursor produced the best code architecture and completed in just 1 minute 2 seconds. Its output included named constants, encapsulated systems, declarative level data, coyote time, jump buffering, seeded procedural generation, and accessibility attributes.
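For readers unfamiliar with two of the techniques named here, coyote time lets the player jump for a short moment after walking off a ledge, and jump buffering remembers a jump press made just before landing. The sketch below is one common way to implement both with two timers. It is not Cursor's actual code; the constants and the names `createJumpState` and `updateJump` are hypothetical.

```javascript
// Hypothetical tuning windows, in seconds.
const COYOTE_TIME = 0.1;   // how long after leaving the ground a jump still counts
const JUMP_BUFFER = 0.12;  // how long a jump press is remembered before landing

function createJumpState() {
  return { sinceGrounded: Infinity, sincePressed: Infinity };
}

// Call once per frame. jumpPressed should be true only on the frame the key goes down.
// Returns true on the frame a jump should start.
function updateJump(state, dt, onGround, jumpPressed) {
  state.sinceGrounded = onGround ? 0 : state.sinceGrounded + dt;
  state.sincePressed = jumpPressed ? 0 : state.sincePressed + dt;

  if (state.sinceGrounded <= COYOTE_TIME && state.sincePressed <= JUMP_BUFFER) {
    // Consume both timers so a single press produces a single jump.
    state.sinceGrounded = Infinity;
    state.sincePressed = Infinity;
    return true;
  }
  return false;
}
```

Both windows are small enough that players rarely notice them directly, but they make jumps near ledges and landings feel more forgiving.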

Best for rapid prototyping and maintainability. Weakness: the visuals were basic and movement speed was hard to control.

The balanced middle option

Codex delivered a playable and responsive game in 1 minute 15 seconds. The output had strong mechanics, clean collision behaviour, pine silhouettes, gems, spikes, pits, score, lives, level text, and difficulty scaling. It did not look as polished as Claude Code or feel as architecturally refined as Cursor, but it landed a strong middle position.

Best for quick, well-rounded output. Weakness: scattered magic numbers, a heavier update function, and manual copy/paste friction reduced the iteration score.

What The Test Revealed

01 Runnable output is now table stakes

All three platforms produced working browser games with no major manual fixes. That is a meaningful maturity signal for constrained single-file software prompts.

02 The best output and fastest workflow were different tools

Claude Code created the strongest artifact. Cursor created the strongest workflow. That distinction matters when choosing a coding assistant for real projects.

03 Architecture and aesthetics still trade off

Cursor prioritised maintainable structure over visual presentation. Claude Code pushed presentation harder. Codex stayed between the two.

04 Iteration workflow is part of quality

For vibe coding, output quality is not just the final code. File handling, update speed, and whether the assistant reduces friction all change the practical score.

Practical Recommendation

Choose Cursor when speed and maintainability matter

It is the best fit for rapid prototyping, iteration-heavy workflows, and code that may be extended beyond the first generated artifact.

Choose Claude Code when presentation matters most

It produced the most impressive playable game, making it a strong choice for demos, visual prototypes, and user-facing proof-of-concepts.

Choose Codex when you want balance

It is a reliable middle option: fast, playable, mechanically solid, and good enough visually, though less seamless in this particular workflow.

Do not ignore time as a quality signal

A brilliant output that arrives too slowly can lose to a slightly less polished result that supports fast iteration and keeps momentum high.

Bottom Line

This benchmark shows that the current generation of AI coding tools can produce complete, playable browser games from a single natural-language prompt. None of the tools failed at basic runnable code. The differences between them came down to workflow velocity, polish, tuning, and maintainability.

If the question is "which tool made the best game?", the answer is Claude Code. If the question is "which tool performed best as a practical coding workflow?", the answer is Cursor. If the question is "which tool delivered the most balanced result quickly?", Codex makes a strong case.